LLMs are almost as good as bespoke clinical diagnosis systems

Reading: Dedicated AI Expert System vs Generative AI With Large Language Model for Clinical Diagnoses, JAMA Network Open, 29 May 2025.

I’d assume an expert system for clinical diagnosis, developed over 40 years, would perform better than an off-the-shelf consumer LLM, like ChatGPT. It does! But only just, and the difference is not statistically significant.

What they compared

An expert system is a knowledge base plus logical rules. The idea is to codify the knowledge of an expert, sometimes as IF…THEN rules.
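To make the IF…THEN idea concrete, here is a minimal sketch of a rule-based system using forward chaining. The rules, the finding names and the `forward_chain` function are all hypothetical, deliberately simplistic, and not clinical guidance. DXplain’s actual knowledge base and reasoning are not described here, so this is only the general shape of the approach.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """IF every item in `conditions` is known THEN add `conclusion`."""
    conditions: frozenset
    conclusion: str


def forward_chain(facts, rules):
    """Apply rules repeatedly until no rule adds a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                changed = True
    return known


# Hypothetical toy rules for illustration only (not real clinical logic).
RULES = [
    Rule(frozenset({"fever", "cough"}), "suspect respiratory infection"),
    Rule(
        frozenset({"suspect respiratory infection", "chest x-ray infiltrate"}),
        "consider pneumonia",
    ),
]

findings = {"fever", "cough", "chest x-ray infiltrate"}
print(sorted(forward_chain(findings, RULES) - findings))
# → ['consider pneumonia', 'suspect respiratory infection']
```

Because each conclusion is reached through named rules, a system like this can show which rules fired, which is where the explanations come from.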

This study compared an expert system, DXplain, against ChatGPT and Gemini. The authors ran 36 clinical cases through each system and had it come up with a list of diagnoses. Scoring is based on where the correct diagnosis was positioned in that list: 5 points if it was in the first five outputs, 4 points for the next band down, and so on down to 1 point.
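As a rough sketch of that scoring scheme: I’m assuming here that each band is five positions wide (so 1 point covers positions 21 to 25, which fits the “top 25” mentioned in the findings) and that a diagnosis outside the top 25 scores 0. The function name `rank_score` and its parameters are mine, not the paper’s.

```python
def rank_score(rank, band_size=5, max_score=5):
    """Score a 1-based rank: 1-5 -> 5, 6-10 -> 4, ..., 21-25 -> 1, otherwise 0.

    Assumption: bands are `band_size` wide and a missing diagnosis
    (rank is None) scores 0.
    """
    if rank is None or rank < 1:
        return 0
    band = (rank - 1) // band_size  # 0 for the first band, 1 for the second, ...
    return max(max_score - band, 0)


for rank in (1, 5, 6, 25, 26, None):
    print(rank, rank_score(rank))
```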

More details on the results in a moment. But first a note on the effort involved in using these systems.

Differences in effort

For the LLMs, there was a short prompt (“…Given the following scenario, what would be the diagnoses you would consider, in rank order from most likely to least likely…”). Added to that was a scenario and lab results.

Compare that to the expert system (a diagnostic decision support system, or DDSS, in the paper’s terms):

“DDSS requires a user to enter findings from a controlled vocabulary by searching its dictionary, assisted by its use of common lexical techniques such as key word matching with stemming. Consequently, for the purposes of this study, individual findings from each case had to be extracted and then mapped to the DDSS’s clinical vocabulary. For the extraction task, 6 clinicians were recruited.”
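For a feel of what “key word matching with stemming” means, here is a toy sketch. The vocabulary, the crude suffix-stripping `stem` function and `search_vocabulary` are all my own illustration. I don’t know how DXplain implements its dictionary search, and a real system would use a proper stemmer and a far larger vocabulary.

```python
import re

SUFFIXES = ("ing", "es", "ed", "s")  # crude stemmer: tried in this order


def stem(word):
    """Lower-case a word and strip one common suffix, keeping at least 3 letters."""
    word = word.lower()
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def stems(text):
    """Return the set of stems for every alphabetic word in the text."""
    return {stem(w) for w in re.findall(r"[a-zA-Z]+", text)}


def search_vocabulary(query, vocabulary):
    """Return vocabulary terms sharing at least one stem with the query, best overlap first."""
    query_stems = stems(query)
    scored = [(len(query_stems & stems(term)), term) for term in vocabulary]
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))
    return [term for score, term in ranked if score > 0]


# Hypothetical controlled vocabulary, for illustration only.
VOCAB = ["night sweats", "weight loss", "joint swelling", "productive cough"]

print(search_vocabulary("patient is sweating at night", VOCAB))
# → ['night sweats']
```

Even with this help, someone still has to read the case, pick out each finding, and confirm the match, which is the work the six clinicians were recruited for.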

That sounds like a lot more work. You can see what it looks like, and listen to the clickety-clack of the keyboard, in this very nice DXplain demo video.

But what about the results?

There were various experiments, but the key findings are:

- Everybody did well: “In this diagnostic study comparing the performance of a traditional DDSS and current LLMs on unpublished clinical cases, in most cases, every system listed the case diagnosis in their top 25 diagnoses if laboratory test results were included.”

- “Statistical significance was not reached in any of the comparisons”

- But the expert system did perform better.

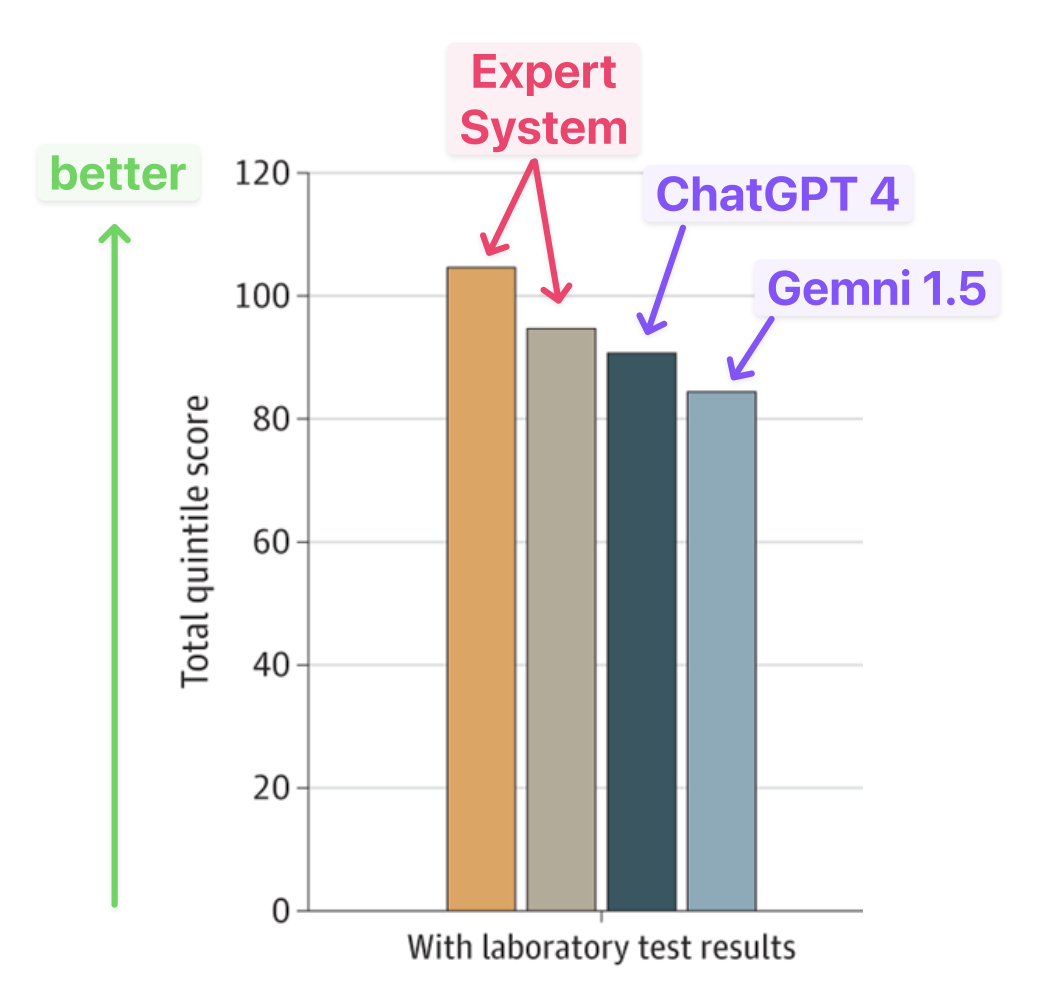

It was close, as shown in the main results:

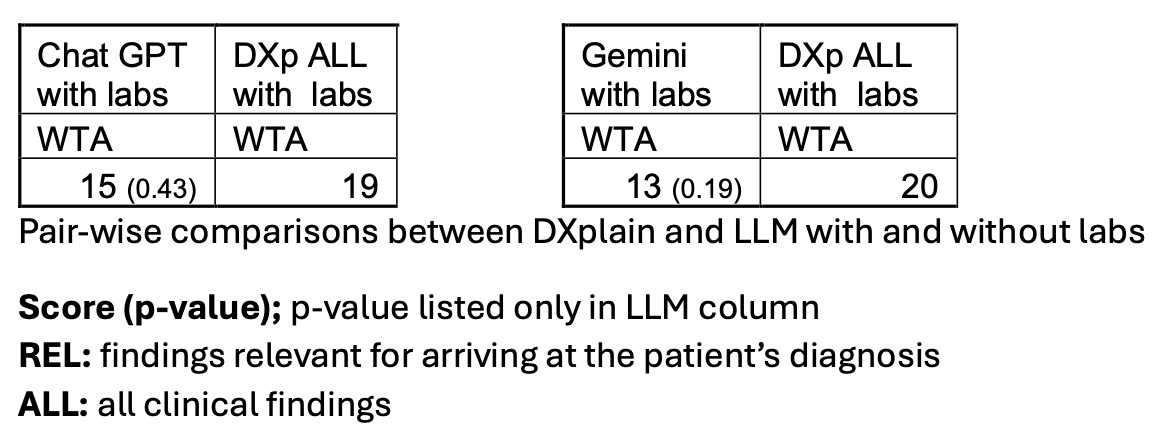

The study also looked at a “winner takes all” comparison. That’s two systems going head-to-head on each case, and whichever ranks the correct diagnosis higher is the winner.

In other words, ChatGPT ranked the correct diagnosis higher in 15 of 34 results, and the expert system ranked it higher in 19 of 34.
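To get a sense of how close 19 versus 15 is, here is a small sketch of the head-to-head count, followed by an exact two-sided sign test on the 19/15 split. The sign test is my own back-of-the-envelope check and not necessarily the analysis the authors ran. Skipping tied cases in `head_to_head` is also my assumption.

```python
from math import comb


def head_to_head(ranks_a, ranks_b):
    """Count cases each system wins; a lower rank number means a higher position.

    Assumption: tied cases count for neither system.
    """
    pairs = list(zip(ranks_a, ranks_b))
    wins_a = sum(a < b for a, b in pairs)
    wins_b = sum(b < a for a, b in pairs)
    return wins_a, wins_b


def sign_test_p(wins_a, wins_b):
    """Two-sided exact sign test: p-value under a 50/50 null hypothesis."""
    n = wins_a + wins_b
    k = max(wins_a, wins_b)
    upper_tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    return min(1.0, 2 * upper_tail)


# The split reported above: ChatGPT 15, expert system 19.
print(f"expert system {19 / 34:.0%}, ChatGPT {15 / 34:.0%}")
print(f"sign test p-value: {sign_test_p(15, 19):.2f}")
```

Under this check the p-value is well above 0.05, which is consistent with the paper’s statement that statistical significance was not reached.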

Which is really “better”?

It’s a small set of case studies, but the expert system does better. However, aside from those results, there are other notable differences:

- DXplain and its ilk have restricted access and, from what I can tell, are mainly used in training and education;

- You can use ChatGPT right now, for free.

And which do you prefer?

- DXplain uses logic and can give you an explanation (although you need clinical knowledge to use the expert system, and make sense of the explanations);

- LLMs are pattern matchers.

The best of both worlds might be possible:

“[…] we have done preliminary work that suggests that an LLM can be used to reliably extract and map clinical findings from narrative text for input into the DDSS. We are excited about combining the new generation of AI tools with existing systems to better automate diagnostic decision support because even the most effective tool would not be beneficial if it is not used.”

I’d like to see more of that, but it’s difficult to do while the logic-based systems are walled off.