Making LLMs safer for health care support

This is a hard, perhaps impossible, problem to fix as it stands. Limbic are having a go at it.

Reading: The Limbic Layer: Transforming Large Language Models (LLMs) into Clinical Mental Health Experts, PsyArXiv preprint, 26 August 2025.

Limbic have a Class IIa medical device for psychological assessment, and are also looking into supporting mental health via CBT exercises delivered with an LLM involved.

That doesn’t sound like a great idea, but the way they’ve gone about it holds promise:

As Ross Harper, their CEO, put it in an interview: “you don’t want a language model, implicitly, making decisions. It’s not ready.”

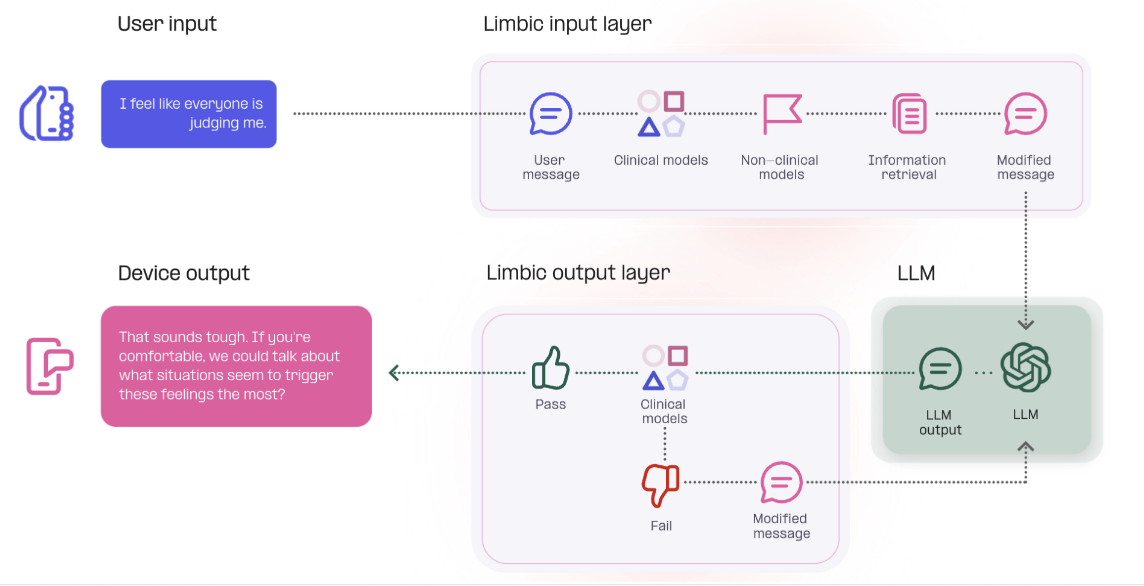

What you do instead is have decisions taken by a set of more traditional machine learning models, plus presumably some flagging of the content going in and out. You let the LLM do what it’s good at: generating natural-sounding conversation.
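To make that split concrete, here is a minimal sketch of the general idea, not Limbic's actual design. Every name in it (`risk_score`, `decide`, `respond`, `ESCALATION_TEXT`) is hypothetical, and the "traditional model" is a toy keyword check standing in for a trained classifier. The point is only that the decision is made outside the LLM, which is then asked to word a reply for an action that has already been chosen.

```python
from dataclasses import dataclass
from typing import Callable

# Fixed, pre-approved wording: the LLM never writes this (hypothetical text).
ESCALATION_TEXT = "It sounds like you may need urgent support. Please contact your local crisis service."


@dataclass
class Decision:
    action: str  # "escalate" or "cbt_exercise"
    reason: str


def risk_score(message: str) -> float:
    """Toy stand-in for a trained risk classifier (assumption, not a real model)."""
    flagged_phrases = ("hurt myself", "end my life")
    text = message.lower()
    return 1.0 if any(phrase in text for phrase in flagged_phrases) else 0.0


def decide(message: str, threshold: float = 0.5) -> Decision:
    """The decision is taken here, by the non-LLM model, before any text is generated."""
    if risk_score(message) >= threshold:
        return Decision("escalate", "risk score at or above threshold")
    return Decision("cbt_exercise", "no risk flagged")


def respond(message: str, llm: Callable[[str], str]) -> str:
    """Route on the decision; the LLM only phrases the already-chosen action."""
    decision = decide(message)
    if decision.action == "escalate":
        return ESCALATION_TEXT  # LLM is bypassed entirely
    prompt = f"Guide the user through a CBT thought-record exercise. User said: {message}"
    return llm(prompt)


def fake_llm(prompt: str) -> str:
    """Placeholder for a real LLM call, so the sketch runs offline."""
    return "LLM: " + prompt
```

Calling `respond("I feel anxious about work", fake_llm)` goes through the LLM path, while a message containing a flagged phrase returns the fixed escalation text without the LLM being called at all.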

To show how hard this problem is, consider that 17 CBT therapists assessed the output of the system and compared it to output from a standard prompted consumer LLM. Even with all that extra machinery, 15 of the 17 therapists thought the Limbic system was less harmful than the LLM alone. That is a great result, but I wonder what the other 2 thought. So even sophisticated guard-rails don't get you 100% of the way there.

It’d be wonderful to see more details about the clinical models: what they are and what they do.