Some GPs use LLMs today for write-ups and diagnosis (but most don’t)

Reading: Generative artificial intelligence in primary care: an online survey of UK general practitioners, BMJ Health & Care Informatics, 17 September 2024.

It’s a survey of 1,000 GPs, so keep in mind the usual worries about responder bias and demographic differences (PDF) from the GP population.

But it’s great to see. A smart idea to just ask GPs what they are doing with this tech.

The survey found most GPs (who responded) are not using LLMs today. I was surprised as many as 20% are, given the risks of pasting personal data into standard ChatGPT:

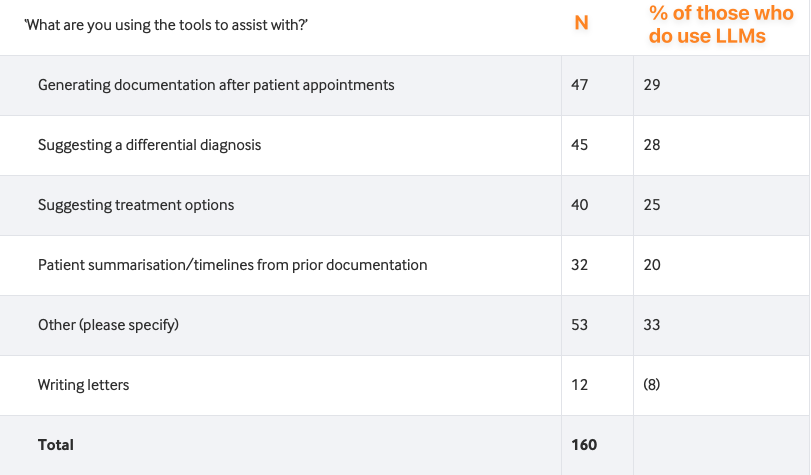

One in five of the UK GPs surveyed here reported using LLM-powered chatbots to assist with tasks in clinical practice; among them, ChatGPT was the most used tool. More than one in four participants who used generative AI reported using these tools to assist with writing documentation after patient appointments or with differential diagnosis.

The use cases are in table 1:

One of the authors, Charlotte R. Blease, was kind enough to let me know that research and teaching (as well as letter writing) were part of the “Other” uses.

I’d love to know what the “Other” (uncommon?) LLMs were. There’s a follow-up paper coming.

* * *

Update: the follow-up paper has been published, showing growth to 25%. There's a summary post from the author, "From Toy to Tool: How UK GPs Quietly Normalised Generative AI":

Generative AI in UK primary care has moved from taboo to tool in a single year — without oversight, training, or transparency. The question is no longer whether doctors should use AI. It’s how we make its use safe, accountable, and honest.